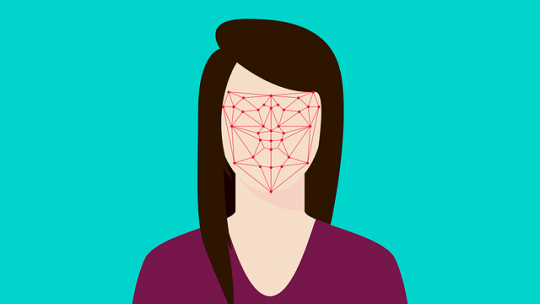

The Australian government's proposed facial matching technology risks racial bias and may well have a chilling effect on the right to freedom of assembly unless it is administered with further safeguards, says the Human Rights Law Centre (HRLC).

The warning came in a submission to a parliamentary committee that is examining the Coalition’s proposal to allow Home Affairs to collect, use, and disclose facial identification information.

Overreach Warnings

The facial matching system was agreed to, in principle, by the states in October last year, but Victoria and the Law Council of Australia have since issued warnings of overreach. Critics have warned that the Coalition’s Identity Matching Services Bill allows local governments and the private sector to access facial verification information, and that the system could therefore be used to prosecute low-level crimes.

In its submission to the Parliamentary Joint Committee on Intelligence and Security, the HRLC warned that the bill was clearly and dangerously insufficient, and that the system was high-risk because the bill failed to adequately identify the uses of facial matching technology or to put mechanisms in place to regulate them.

Privacy is a pressing concern for many people, and whether or not you already use facial recognition to sign in to your iPhone X, government use of the technology affects everyone.

False Positives and False Negatives Likely to Arise Disproportionately

The centre further submitted that false positive and false negative results in facial recognition are likely to occur disproportionately for people who belong to Australian ethnic minorities. It cited studies that found facial recognition technology to be biased in favour of the dominant ethnic group in the location where it is developed.

False positives of this kind could subject people to unwarranted investigation, unlawful surveillance, and denial of employment due to misidentification, all of which would erode trust in law enforcement and security agencies. The centre has recommended that accuracy tests be undertaken at least once a year, and that these include demographic tests.

The use of this kind of technology could also pose a significant threat to freedom of assembly, association, and expression, the submission stated. Facial recognition technology risks turning public spaces into spheres in which every individual can be identified and then monitored, which is of particular concern in the context of civic gatherings, protests, and demonstrations.

People May Not Exercise Their Right to Assembly

The HRLC has said that the use of facial recognition may well discourage people from freely exercising their right to assembly. Even citizens who have done nothing unlawful may not want police to know that they attended politically sensitive gatherings, such as protests against police violence or Aboriginal deaths in custody.

Politically motivated surveillance may not be a likely short-term consequence of the bill, said the HRLC, but the system’s design should guard against it, and the bill should not be passed without the safeguards necessary to protect freedom of expression.